April 21, 2026 /SemiMedia/ — Samsung Electronics, a South Korea-based semiconductor and memory supplier, is aiming to produce early samples of its next-generation HBM4E chips as soon as May, with shipments to Nvidia planned after internal testing, according to industry sources.

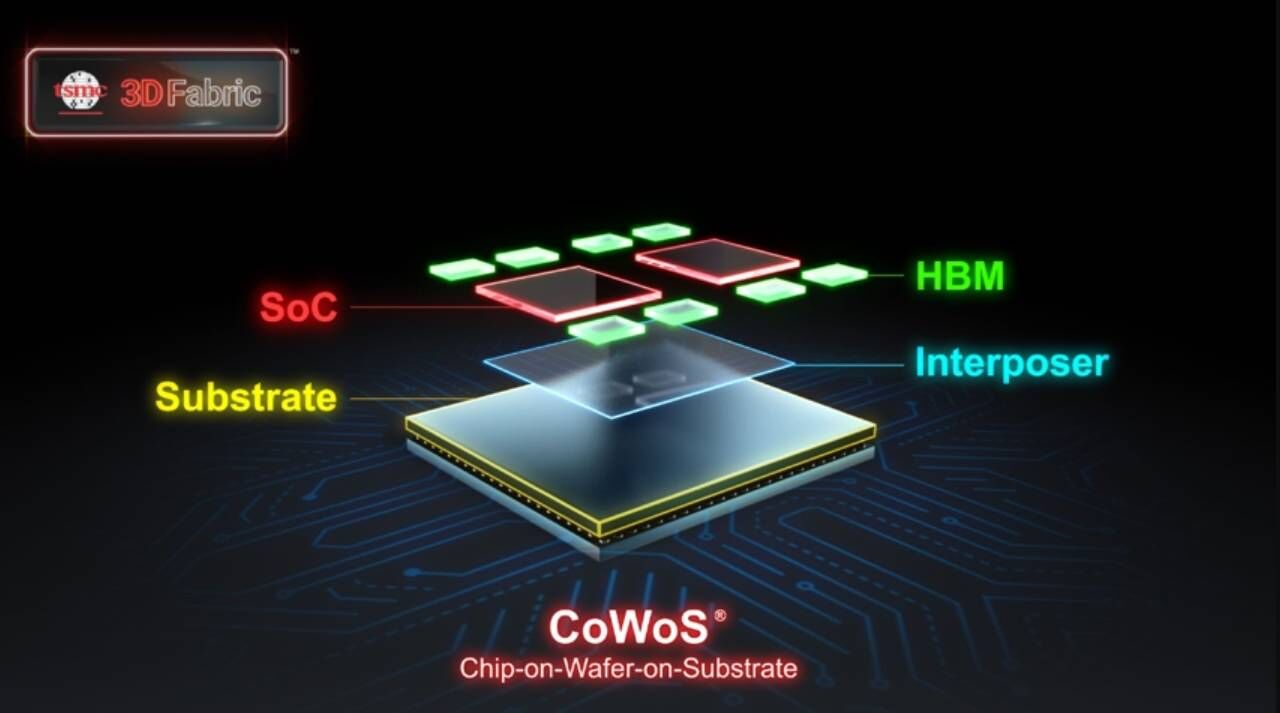

High-bandwidth memory (HBM) has become a key part of AI systems, and suppliers are moving quickly to keep up with demand. Samsung is accelerating development of HBM4E, its seventh-generation HBM product, with a focus on meeting performance targets before sending samples to customers.

The company’s foundry unit is expected to produce the logic dies for HBM4E around mid-May. Its memory division will then stack these with DRAM dies. The finished samples will go through internal checks before being delivered to Nvidia for further validation.

Samsung showed a version of its HBM4E at Nvidia’s GTC conference earlier this year, but industry sources said the chip was closer to a demo unit than a production-ready product. The new memory is expected to reach speeds of up to 16 Gbps per pin, with total bandwidth of around 4.0 TB/s, higher than HBM4.
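The quoted figures are roughly consistent with each other if each stack uses a 2048-bit data interface. That width comes from the JEDEC HBM4 standard and is an assumption here, since the article does not state the HBM4E interface width. Peak bandwidth is then the per-pin rate times the number of data pins, divided by 8 to convert bits to bytes. The helper name below is hypothetical and serves only to illustrate the arithmetic.

```python
# Hypothetical helper: peak per-stack bandwidth from per-pin data rate and interface width.
# Assumes a 2048-bit data interface per stack (the JEDEC HBM4 width); the article does not
# state the HBM4E interface width.


def stack_bandwidth_tbps(pin_rate_gbps: float, bus_width_bits: int = 2048) -> float:
    """Return peak per-stack bandwidth in TB/s, using decimal (SI) units."""
    total_gbits = pin_rate_gbps * bus_width_bits  # Gb/s summed across all data pins
    total_gbytes = total_gbits / 8                # bits -> bytes
    return total_gbytes / 1000                    # GB/s -> TB/s


if __name__ == "__main__":
    # 16 Gbps/pin * 2048 pins / 8 / 1000 = 4.096 TB/s
    print(f"{stack_bandwidth_tbps(16):.3f} TB/s")
```

At 16 Gbps per pin this gives about 4.1 TB/s, in line with the "around 4.0 TB/s" figure reported by industry sources.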

For manufacturing, Samsung is likely to use a 4nm foundry process for the logic die, while the DRAM will be built on a 10nm-class node. The company is pushing to secure an early lead in HBM4 production.

Rival SK hynix is also moving ahead with its own HBM4E plans, using advanced process technologies as competition in AI memory intensifies.

Nvidia’s next-generation Vera Rubin platform, which is expected to use HBM4 and HBM4E, has seen some changes in its rollout schedule. Samsung is moving faster this time, aiming to avoid falling behind as it did in the HBM3E market.
